13 Apr

Why you should be concerned about live facial recognition coming to North Yorkshire

Charlie Whelton is policy and campaigns officer at the human rights charity Liberty.

Facial recognition technology has been used by the police in the UK since 2015. Every force in the country is now using it in one form or another.

North Yorkshire Police has now said it wants to join the growing number of forces which also use what’s called ‘live facial recognition’.

This usually involves parking vans equipped with the technology on busy shopping streets or outside stadiums and train stations, scanning the hundreds of thousands of us who come within range of the police cameras on the vehicles’ roofs, and attempting to match our faces in real time to images on secretive watchlists.

In July 2025, the Metropolitan Police announced it would double its number of weekly live facial recognition deployments. And in January, the government announced a fivefold expansion of facial recognition vans from 10 to 50, allowing use of the technology to spread across the country.

Some forces are going even further. South Wales Police and the Met in London have tested systems of fixed cameras in Cardiff and Croydon, and both forces have also been trialling facial recognition technology on officers’ phones, enabling them to scan and potentially instantly identify anyone they come into contact with.

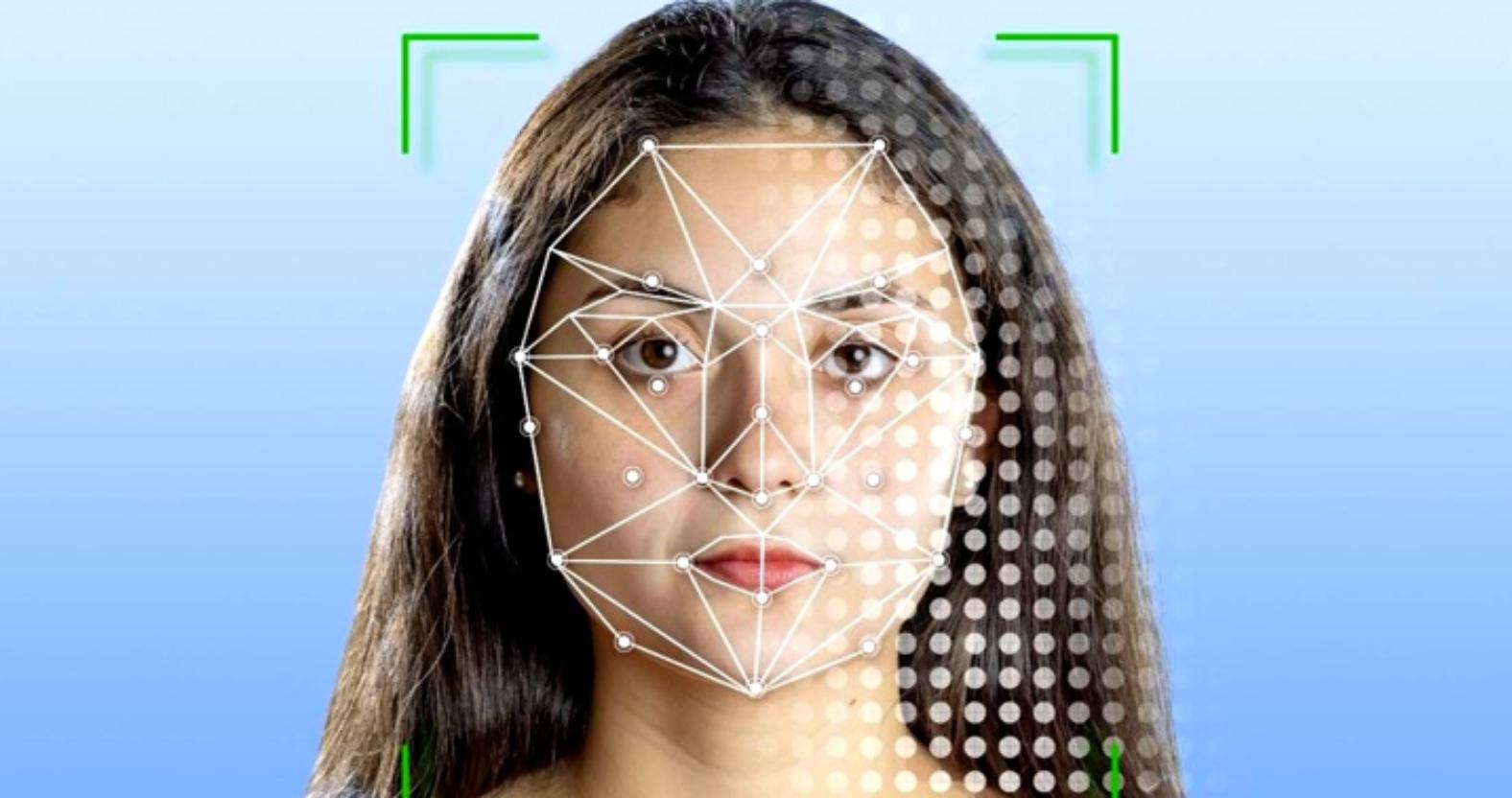

Stock image of facial recognition technology

While these are very visible uses of facial recognition technology, the majority of searches take place on computers in police stations. ‘Retrospective facial recognition’ is in use by all forces and allows images of people the police want to identify to be checked against vast databases, usually of custody images but increasingly others, such as the passport database, as well.

In December, the Home Office admitted that the retrospective facial recognition tool it has been using for these searches is dramatically racially biased, misidentifying Asian faces 100 times more often than white ones. This has likely contributed to cases like that of Alvi Choudhary, a British Asian man who was misidentified by facial recognition and arrested for a burglary in a city he’d never even been to. Shockingly, that racist facial recognition tool is still being used by police forces across the country.


There is no dedicated law in place to govern the use of facial recognition technology by the police. We understand that the government is working to correct that and legislate in this important area, but until it does, this rapid rollout of the technology should be halted. We need safeguards in place to protect each of us and prioritise our rights.

Facial recognition changes what it means to simply live our lives. We want to be able to see our favourite music artists on tour, go to the football, take road trips, and make memories with our loved ones safely without being monitored.

Facial recognition that can identify and track down all of us is dangerous tech – and it’s being used without any safeguards. And history tells us that surveillance tech will always be disproportionately used against communities of colour.

Other countries are putting laws in place to limit the dangerous effects of this technology. We need to see urgent action from the government to introduce safeguards to limit how the police can use facial recognition, and to protect all of us from abuse of power as we go about our daily lives.
